So far, the testing I’ve discussed has been about optimizing a single communication or your overall messaging. The next step is to answer fundamental questions about the nature of your program – things like how many times to communicate and through what means.

There is a pretty good chance that you are not communicating enough to many of your constituents.

But wait, you say. We send out a mail piece a month, have multiple telemarketing cycles per year, and have both a monthly e-newsletter and semi-frequent emails on other topics. Our board members and staff who are on our seed lists are consistently on me, you say, that we are communicating too much. And we get donors who complain that they are getting a mail piece before their last one was acknowledged.

However, remember from the discussion of segmentation that more donors say their nonprofits are undercommunicating than overcommunicating. That means the average nonprofit needs to be communicating more than it is.

And the concern that you are annoying people by asking for money comes from an oft-discussed and concerning inferiority complex among nonprofits. To be effective, we have to believe that we are good enough to merit a gift and then make an appropriate ask. We want to give our donors an opportunity to be a part of something powerful and transformative. Remember that if we do our jobs well, donating to our organization is a positive experience.

So how would you test whether you are communicating often enough or too often? The first step is to establish your control: map out where you are now with a cross-medium communications calendar. This is easier said than done, but it’s a necessary first step. It need not be perfect. Because you will want some communications to be timely and focused on current events, you may need placeholders that simply indicate “we’re going to email something here.”

Then split your file so that one part gets X communications and another gets X plus or minus 1. I’d suggest plus. Then measure the total success of the communications for each part.
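For the split itself, random assignment is what keeps the two panels comparable. Here is a minimal sketch in Python, assuming your file can be exported as a list of donor IDs; the function name `split_file`, the 50/50 share, and the seed are my own placeholders rather than anything your database will hand you.

```python
import random


def split_file(donor_ids, test_share=0.5, seed=2024):
    """Randomly assign donors to a control panel and a test panel."""
    rng = random.Random(seed)  # fixed seed so the same split can be rebuilt later
    shuffled = list(donor_ids)
    rng.shuffle(shuffled)
    cut = int(len(shuffled) * test_share)
    # "test" gets X + 1 communications, "control" gets the usual X.
    return {"test": shuffled[:cut], "control": shuffled[cut:]}


# Hypothetical file of 1,000 donor IDs.
panels = split_file(range(1, 1001))
print(len(panels["control"]), len(panels["test"]))  # 500 500
```

Whatever tool you use, the point is that chance decides who lands in which panel, not a convenient field in the database.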

I once helped lead a test where we took mail pieces out of our schedule during membership recruitment. We would send a piece or two, then wait to see whether those donors would donate before sending to them again, so that we could address them properly as either a renewed donor or someone who had not yet renewed. Each individual piece in the resting membership series had a significantly better ROI and better net than the pieces in the more consistent appeal series.

Yet the appeal series brought in more money for the organization and the mission overall. I would argue, as I did at the time, that this is the metric that actually matters. If you want to maximize metrics like ROI or response rate, your best opportunity is to send one letter to your single best donor – you’ll get a 100% response rate and ROI percentages in the tens of thousands or more.

But for real life, the goal is more money for more mission. So overall net is the metric of choice.
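To make the arithmetic concrete, here is a small sketch comparing a resting series with a more consistent one. Every number in it is invented for illustration (these are not the results of the test I described), and `summarize` is just a helper name I made up.

```python
def summarize(name, pieces, revenue, cost):
    """Return total net, ROI, and net per piece for one mail series."""
    net = revenue - cost
    return {
        "series": name,
        "net": net,
        "roi": net / cost,
        "net_per_piece": net / pieces,
    }


# Hypothetical figures, chosen only to show the pattern.
resting = summarize("resting", pieces=3, revenue=12_000, cost=3_000)
consistent = summarize("consistent", pieces=6, revenue=18_000, cost=6_000)

for s in (resting, consistent):
    print(
        f"{s['series']:>10}: net ${s['net']:,}  "
        f"ROI {s['roi']:.0%}  net per piece ${s['net_per_piece']:,.0f}"
    )
```

In this made-up case the resting series wins on ROI and on net per piece, while the consistent series still delivers more total net, which is the number that funds the mission.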

The easiest campaigns to add to are the ones that already have a multistage component. Let’s say you have a matching gift campaign that goes mail piece 1, email 1, mail piece 2, email 2 (with two weeks between each). A way of testing up would be to look at doing mail piece 1, email 1 + mail piece 1.5, mail piece 2 + email 1.5, email 2 (so there’s still two weeks between each set of communications, but they double up in the middle). That would be adding a mail piece and an email and if you test both of these with net as your goal, you will have a better framework for the campaign in the following year as well as for additional testing throughout the year.

With email only campaigns, there’s another way of checking whether you are over-emailing your file – looking to see if your total opens and clicks fall. There is a point at which open rates and click rates will begin to fall; however, you shouldn’t worry too much until adding another email not only lowers your open and click rates but lowers your total number of opens and clicks (similar to a focus on total net, rather than net per piece).
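That rule of thumb can be written down as a quick check. This is a sketch under my own assumptions: two panels of equal size, one of which received an extra email, each summarized as counts of delivered emails, opens, and clicks. The function name, dictionary keys, and numbers are all hypothetical.

```python
def cadence_verdict(control, test):
    """Compare two equal-sized email panels; `test` received one more email."""

    def rate(panel, key):
        return panel[key] / panel["delivered"]

    rates_fell = (
        rate(test, "opens") < rate(control, "opens")
        and rate(test, "clicks") < rate(control, "clicks")
    )
    totals_fell = (
        test["opens"] < control["opens"]
        and test["clicks"] < control["clicks"]
    )
    if totals_fell:
        return "totals fell: likely over-emailing"
    if rates_fell:
        return "rates fell but totals held up: keep the extra email"
    return "no sign of fatigue"


# Hypothetical monthly results for a 10,000-person panel at 4 vs. 5 emails.
four_emails = {"delivered": 40_000, "opens": 10_000, "clicks": 1_200}
five_emails = {"delivered": 50_000, "opens": 11_000, "clicks": 1_300}
print(cadence_verdict(four_emails, five_emails))
```

Here the open rate drops from 25% to 22%, but total opens and clicks both rise, so the extra email is still earning its place.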

This tipping point in email is probably well past where you think it is. HubSpot studied how the number of emails per month affects both open and click-through rates. The sweet spot with the highest open and click rates was between 15 and 30 emails per month.

That’s right – opens and clicks went up until you got in the range of daily emails. Things went downhill after 30 emails per month. So if you are sending more than one email a day (on any day but December 31 or the last day of a matching opportunity), you might be emailing too much – take that as a cautionary tale for the 0.0001% of you who are doing this. For the other 99.9999%, hopefully this will support the business case for testing up on your emails.

There are three tricks to cross-platform testing:

- There is a whole science of attribution testing. If you have the ability to look at this literature and your data systems will support it, go for it. However, most organizations in my experience don’t have all of their data in the same place initially, which makes this exceedingly hard. Thus, this sort of testing up/down for cadence should look at revenue by audience test panel rather than by the medium through which the donation is made. You may be surprised how much adding a mail piece increases your online revenue or adding a telemarketing cycle boosts the mail piece.

- Unlike with strictly piece-based attributes, I’d argue you have to test every cell here because there are interactions among the means of communication. It may be that mail + mail is better than mail alone, and that mail + phone is better than mail alone, but that when you have mail + phone + mail, you hit diminishing returns that don’t justify sending both mail pieces.

- You will have to be vigilant about the creation of your testing cells.

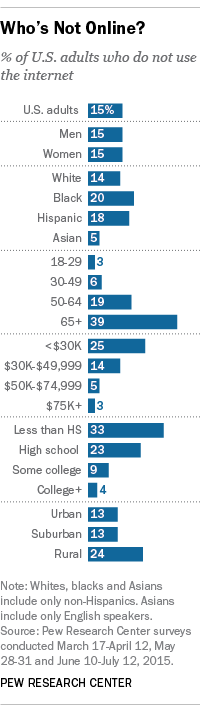

As much as you would like to call everyone who has a phone number or email everyone who has an email address, and use those who don’t have a phone number or email on file as a control audience, those are different types of donors. Pew has a great summary of the non-Internet users of the US at right. Even if you looked just at the age and income variables, you can see how this would make your control audience look very different from your test audience. In reverse, 66% of 25- to 29-year-olds live in households with no landline, compared with 14% of those 65 and older, according to the National Center for Health Statistics.

So, if you think of the average person for whom you have a phone number but not an email address, that person looks very different from the one for whom you have an email address but not a phone number. Thus, you have to either control for all demographic variables in your assessment (hard) or split test people within the means of communication that you have available for them (marginally easier).
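One way to do the marginally easier version is to group donors by which contact channels you have on file and then randomize within each group, so that test and control contain the same mix of phone-only, email-only, both, and neither. The sketch below assumes each donor record carries hypothetical `has_phone` and `has_email` flags; the field names and the even split are my own choices for illustration.

```python
import random
from collections import defaultdict


def assign_within_strata(donors, seed=7):
    """Randomly split each contact-channel group in half (test vs. control)."""
    rng = random.Random(seed)
    strata = defaultdict(list)
    for donor in donors:
        # Donors with the same channels on file are compared only to each other.
        strata[(donor["has_phone"], donor["has_email"])].append(donor["id"])

    assignment = {}
    for ids in strata.values():
        rng.shuffle(ids)
        half = len(ids) // 2
        for position, donor_id in enumerate(ids):
            assignment[donor_id] = "test" if position < half else "control"
    return assignment


# Hypothetical file: 40 donors, 10 in each phone/email combination.
donors = [
    {"id": i, "has_phone": i % 2 == 0, "has_email": i % 4 < 2}
    for i in range(40)
]
assignment = assign_within_strata(donors)
```

With this setup, a phone test is read only among donors who have a phone number, half of whom get the call and half of whom don’t, rather than comparing callable donors against everyone else.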

Thanks for reading and be sure to let me know at nick@directtodonor.com what future topics you’d like to see.

This is a fine way to test if there’s only one thing you want to learn at a time. You can refine your program this way, getting better and better. This is the direct marketing equivalent of kaizen – the practice of continual improvement popularized in manufacturing, but now applied to much strategic thinking.